OpenClaw System Requirements 2026: Minimum and Recommended Hardware

Minimum Specs for Basic Local Testing

As autonomous AI agents have matured, hardware choices have become more important. For developers starting basic local testing, the barrier to entry remains relatively low. The baseline setup requires at least an 8-core processor and 16GB of RAM to handle sequential command-line interface (CLI) executions without severe bottlenecks. This configuration lets individual programmers test basic logic flows and API call routing before moving on to heavier, concurrent scenarios.
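A quick way to see whether a workstation meets this baseline is a small preflight check. The sketch below is illustrative only: the function names and the 10% RAM tolerance are assumptions, not part of any OpenClaw tooling, and `os.cpu_count()` reports logical cores, which may be higher than the physical core count.

```python
import os

MIN_CORES = 8         # baseline from the text above
MIN_RAM_GB = 16       # baseline from the text above
RAM_TOLERANCE = 0.9   # assumption: the OS usually reports a bit less than nominal RAM


def detect_ram_gb():
    """Return installed RAM in GiB, or None if the platform does not expose it."""
    try:
        page_size = os.sysconf("SC_PAGE_SIZE")
        page_count = os.sysconf("SC_PHYS_PAGES")
    except (AttributeError, ValueError, OSError):
        return None  # e.g. Windows, where os.sysconf is unavailable
    return page_size * page_count / 2**30


def check_specs(cores, ram_gb, min_cores=MIN_CORES, min_ram_gb=MIN_RAM_GB):
    """Return a list of human-readable shortfalls; an empty list means OK."""
    problems = []
    if cores is None or cores < min_cores:
        problems.append(f"CPU: found {cores} logical cores, need at least {min_cores}")
    if ram_gb is None:
        problems.append("RAM: could not be detected on this platform")
    elif ram_gb < min_ram_gb * RAM_TOLERANCE:
        problems.append(f"RAM: found {ram_gb:.1f} GB, need about {min_ram_gb} GB")
    return problems


if __name__ == "__main__":
    issues = check_specs(os.cpu_count(), detect_ram_gb())
    print("Baseline met" if not issues else "\n".join(issues))
```

Keeping `check_specs` separate from detection means the thresholds can be tested, or raised for heavier scenarios, without touching platform-specific code.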

Recommended Specs for Continuous Enterprise Workloads

When moving from a sandbox environment to continuous enterprise operations, hardware requirements rise steeply. Production environments demand high core counts and substantial memory bandwidth to sustain uninterrupted data streams. Recommended setups use dual-socket architectures for compute headroom and error-correcting code (ECC) memory, which detects and corrects bit errors in RAM. Together, these help continuous workflows run without silent data corruption or compute starvation under sustained high-concurrency pressure.

Why Are Mini PCs Popular for OpenClaw (And Where Do They Fail)?

The Appeal of Low Initial Costs and Desktop-Friendly Sizes

Small form factor devices have dominated early community discussions largely due to their incredibly low barrier to entry. They offer a desktop-friendly footprint and an attractive initial price tag, allowing small teams to spin up physical nodes quickly. For proof-of-concept projects, these compact machines provide a tangible way to run localized agents without significant upfront capital expenditure.

Hidden Bottlenecks: Thermal Throttling and I/O Limitations

Despite their initial appeal, small form factor devices quickly reveal clear physical limits under sustained enterprise workloads. The cramped chassis frequently leads to thermal throttling: as the chips heat up during heavy AI computation, performance drops sharply. These compact units also have limited PCIe lanes and I/O bottlenecks, which leaves them unable to handle the heavy network traffic generated by a busy cluster of agents communicating at the same time.

Why Do Enterprise Servers Outperform Edge Devices for AI Agents?

Unmatched Multi-Core Compute and Memory Bandwidth

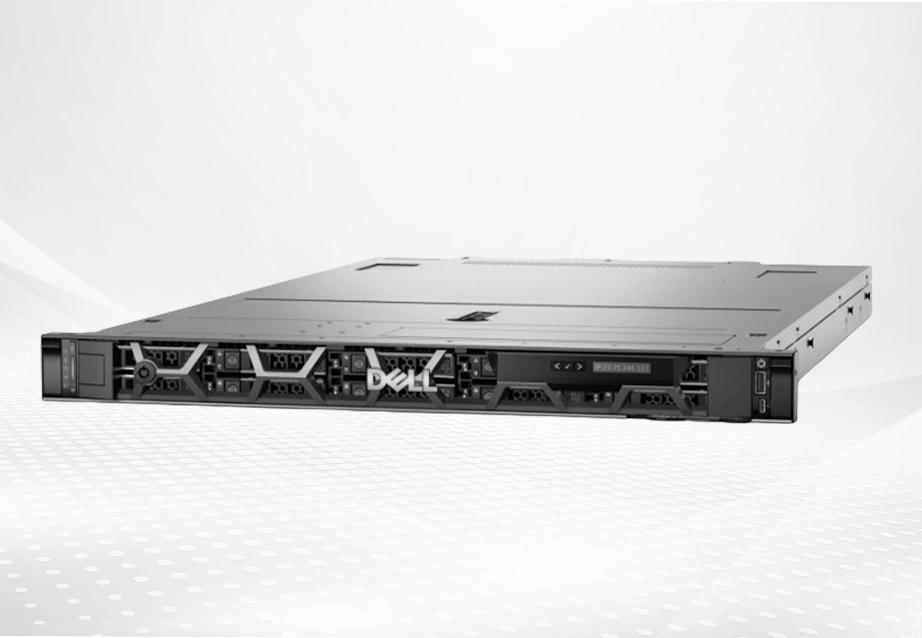

To get the most out of autonomous systems, raw computational power and memory bandwidth are essential. Enterprise hardware is specifically engineered to handle dense, parallel processing tasks. For instance, a platform like the Dell PowerEdge R650 is built for demanding workloads. It supports up to two 3rd Generation Intel Xeon Scalable processors with up to 40 cores per processor, providing the multi-core compute needed to process thousands of simultaneous agent requests.

Ensuring Corporate Data Security and High Availability

Beyond sheer speed, enterprise servers provide security infrastructure that consumer-grade devices generally lack. Enterprise architectures integrate security into every phase of the hardware lifecycle, from design to retirement. Features like cryptographically trusted booting and a silicon root of trust help protect corporate data while maintaining the high availability required for mission-critical operations.

ROI Comparison: Mini PCs vs Enterprise Servers for OpenClaw

Initial Cost vs Long-Term Cost (TCO)

Business decision-makers often mistakenly prioritize initial hardware costs over long-term viability. While a fleet of edge devices might seem cheaper on day one, their rapid degradation under 24/7 load significantly inflates the Total Cost of Ownership (TCO) over a standard three-year cycle. Enterprise servers offset their higher initial price through superior longevity and energy efficiency.
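As a rough illustration of this comparison, the sketch below adds up purchase price, replacement units, running costs, and downtime over a three-year cycle. The `HardwareOption` fields, the `three_year_tco` function, and every figure in the usage example are hypothetical placeholders, not measured or vendor data; substitute your own quotes, energy prices, and failure rates.

```python
from dataclasses import dataclass


@dataclass
class HardwareOption:
    name: str
    unit_price: float                      # purchase price per machine
    units: int                             # number of machines in the fleet
    annual_power_cost_per_unit: float
    annual_maintenance_per_unit: float
    expected_replacements_per_unit: float  # replacements over the whole period
    annual_downtime_hours: float           # for the fleet as a whole
    downtime_cost_per_hour: float


def three_year_tco(opt: HardwareOption, years: int = 3) -> float:
    """Sum purchase, replacement, running, and downtime costs over `years`."""
    purchase = opt.unit_price * opt.units
    replacements = opt.unit_price * opt.expected_replacements_per_unit * opt.units
    running = years * opt.units * (
        opt.annual_power_cost_per_unit + opt.annual_maintenance_per_unit
    )
    downtime = years * opt.annual_downtime_hours * opt.downtime_cost_per_hour
    return purchase + replacements + running + downtime


if __name__ == "__main__":
    # All numbers below are made-up placeholders for illustration only.
    mini_pcs = HardwareOption("mini PC fleet", 800, 20, 60, 40, 0.5, 40, 500)
    server = HardwareOption("rack server", 25000, 1, 900, 1200, 0.0, 4, 500)
    for option in (mini_pcs, server):
        print(f"{option.name}: {three_year_tco(option):,.0f}")
```

The point of the model is that purchase price is only one of four terms; which option wins depends entirely on the replacement and downtime inputs you supply.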

Cost per AI Agent or Workload Unit

The real measure of an AI deployment is cost per workload unit. With a high-density server, organizations can host and run far more agents on a single physical machine. The HPE ProLiant DL385 Gen11 ships with AMD EPYC 9004 series processors that support up to 96 physical cores, which lets organizations consolidate their workloads. As a result, the running cost of each individual AI agent drops to a fraction of what it would be on consumer hardware.
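Cost per workload unit can be estimated by dividing the total cost of a deployment by the number of agents it hosts. In the minimal sketch below, the 96-core figure comes from the text above, while `cores_per_agent`, the function names, and the cost figure are hypothetical assumptions; real agent density depends on the workload and should be measured.

```python
import math


def agents_per_machine(cores: int, cores_per_agent: float) -> int:
    """Estimate how many agents fit on one machine, limited by CPU cores only."""
    if cores_per_agent <= 0:
        raise ValueError("cores_per_agent must be positive")
    return math.floor(cores / cores_per_agent)


def cost_per_agent(total_cost: float, machines: int, agents_each: int) -> float:
    """Spread the total cost evenly across every hosted agent."""
    total_agents = machines * agents_each
    if total_agents <= 0:
        raise ValueError("deployment hosts no agents")
    return total_cost / total_agents


if __name__ == "__main__":
    # Hypothetical inputs: 0.5 cores per agent and a placeholder 3-year cost.
    density = agents_per_machine(cores=96, cores_per_agent=0.5)
    print(density, cost_per_agent(total_cost=30000, machines=1, agents_each=density))
```

This estimate considers CPU cores only; memory, I/O, and network limits may cap density well before the core count does.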

Downtime, Failure Risk, and Maintenance Costs

Consumer hardware is simply not designed for continuous, high-intensity work, which leads to frequent component failures. Enterprise IT infrastructure reduces these risks considerably. With hot-swappable redundant power supplies and advanced thermal management, enterprise servers greatly reduce the chance of unplanned downtime, sparing organizations the heavy financial cost of interrupted workloads.

Scaling Strategy: Add More Devices vs Upgrade Infrastructure

Trying to scale by continually adding more low-end devices creates a fragmented, hard-to-manage network topology. A better scaling strategy is to upgrade a robust platform that can absorb more load internally. Adding memory or using PCIe 5.0 expansion slots on an enterprise server allows smooth growth without adding more physical management points.

How to Choose the Right IT Infrastructure for OpenClaw Deployment

For Individual Developers and Small Teams

For solo developers testing basic code, standard desktop machines or small physical nodes remain practical. These setups allow low-cost testing before committing to a larger architectural change.

For Startups Scaling AI Agent Workloads

As startups begin handling real client data, the infrastructure must change. Entry-level 1U rack servers offer the right middle ground: they provide essential enterprise features like ECC memory and remote management without requiring a large data center footprint.

For Enterprises with High-Concurrency Requirements

Large operations running thousands of concurrent calls leave no room for error. These organizations need multi-node, high-density server racks with high-end network switches. This ensures that large data flows are routed without delay and lets compute capacity shift according to real-time demand.

Working with a Reliable IT Infrastructure Partner

Building a resilient, robust foundation for autonomous agent deployments takes more than buying hardware. It calls for a strategic partnership with an experienced IT supplier. We at Huaying Hengtong understand the details of enterprise infrastructure. Founded in 2016, our company offers a full product range with broad coverage, including servers, networking equipment, and storage products from leading global brands. Guided by a customer-first principle, we build custom solutions tailored to your exact concurrency needs. We have accumulated solid field experience in requirements analysis, equipment selection, and operations and maintenance. By partnering with us, you get high-quality products that fit your needs and support your company's growth, keeping your deployments secure and scalable.

FAQ

Q: What are the real OpenClaw system requirements for continuous enterprise workloads in 2026?

A: Continuous enterprise workloads need a robust architecture with multi-core processors, fast ECC memory, and redundant cooling systems. These specifications are vital for preventing the severe thermal throttling and memory bottlenecks that often occur during long, concurrent autonomous tasks.

Q: Why do mini PCs struggle with OpenClaw hardware comparison benchmarks in production environments?

A: Although small devices work well in short, local tests, they lack the PCIe throughput and heat dissipation needed for sustained production work. Under prolonged load, they suffer large performance drops, which makes them an unreliable baseline for enterprise-level benchmarking.

Q: How does enterprise hardware improve the TCO for OpenClaw deployments compared to consumer devices?

A: Enterprise hardware lowers Total Cost of Ownership substantially by reducing unplanned downtime, extending hardware lifespan, and cutting maintenance costs. Consolidating thousands of tasks onto a single reliable server costs far less over three years than frequently replacing worn-out consumer devices.

Q: What is the most efficient scaling strategy when upgrading an OpenClaw IT infrastructure?

A: The most efficient approach combines vertical and horizontal scaling on enterprise servers. Rather than building a tangled network of many small devices, upgrading the memory and CPU capacity of a dense rack server lets organizations absorb large workload increases without making network management harder.

Q: How can a high-concurrency infrastructure reduce the cost per AI agent unit?

A: High-concurrency setups use powerful, high-core-count processors that can host and run large groups of agents at once. By maximizing compute density per rack unit, organizations spread the hardware cost across thousands of running agents, which drives the cost per unit very low.