Why Do OpenClaw Hardware Requirements Matter in 2026?

The Transition to Sovereign Local AI Agents

The artificial intelligence ecosystem is undergoing a radical shift. Enterprises are actively moving away from cloud-dependent APIs to deploy sovereign local AI agents. This transition ensures that highly sensitive corporate data remains within private network boundaries. However, achieving this level of digital autonomy transfers the immense computational burden of AI reasoning directly onto your local server infrastructure.

How Hardware Directly Dictates Agent Responsiveness

Software algorithms, no matter how advanced, are strictly bound by the physical limitations of their host environment. Running autonomous agents requires systems that can handle continuous, simultaneous tasks without dropping connections or throttling speeds. If the physical components fall short, the entire AI workflow experiences extreme latency. Understanding the exact specifications needed prevents bottlenecked response times and ensures that the infrastructure can sustain uninterrupted operations across multiple departments.

What Are the Minimum OpenClaw Specs for Basic Testing?

Baseline Components for Proof-of-Concept Deployments

When an IT department is just beginning to evaluate local AI automation, setting up a proof-of-concept environment is the first logical step. For a single agent operating on a limited dataset, the minimum entry point typically involves a standard multi-core processor and around 16GB of Error-Correcting Code (ECC) RAM. This baseline configuration provides just enough computational breathing room to initialize the software, load a highly quantized language model, and execute sequential, low-complexity tasks.
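To see why a highly quantized model is needed at this tier, a rough estimate of weight memory helps. The sketch below uses the common approximation of parameter count times bits per weight; the function names, the example model sizes, and the 4 GiB reserve for the operating system and agent runtime are illustrative assumptions, not OpenClaw figures.

```python
def model_weight_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory needed for model weights alone, in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


def fits_in_ram(params_billion: float, bits_per_weight: float,
                ram_gib: float, reserved_gib: float = 4.0) -> bool:
    """True if the weights fit after reserving memory for the OS and runtime.

    reserved_gib is an assumed placeholder; measure it on the real host.
    """
    return model_weight_gib(params_billion, bits_per_weight) <= ram_gib - reserved_gib


if __name__ == "__main__":
    # Hypothetical 7-billion-parameter model on a 16 GB host.
    for bits in (16, 8, 4):
        size = model_weight_gib(7, bits)
        print(f"{bits:>2}-bit: {size:5.2f} GiB, fits in 16 GiB: {fits_in_ram(7, bits, 16)}")
```

Under these assumptions, the 16-bit weights of a 7-billion-parameter model do not fit in 16 GB once the reserve is subtracted, while the 8-bit and 4-bit versions do. The estimate covers weights only; context and working buffers need additional memory.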

The Hidden Risks of Operating on Minimum Thresholds

The baseline configuration lets the software start, but it is poorly suited to real workloads. When the agent handles a complex query or parses a large file, Out-of-Memory (OOM) errors are common. Running hardware near full capacity for long periods also generates sustained heat, which triggers thermal throttling and can shorten component life. The result is a slow, unreliable experience for users.

What Are the Recommended Hardware Specs for Enterprise Production?

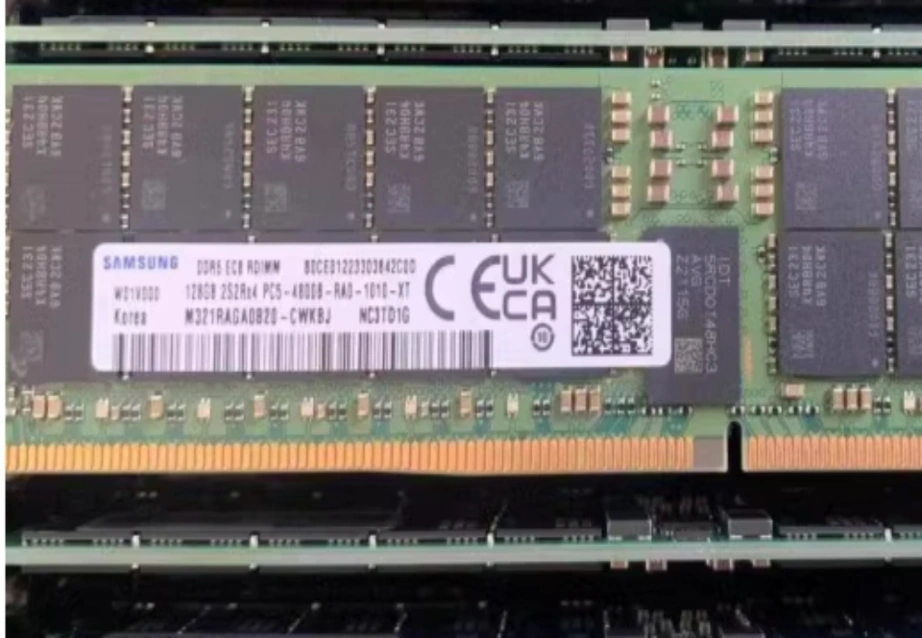

Optimizing Memory Capacity for Complex Context Windows

In a commercial setting, memory is the most critical factor for multi-agent workflows. Agents need large amounts of high-speed RAM to keep expansive context windows active without constantly swapping data to disk. For enterprise production, 64GB to 128GB of high-frequency DDR5 memory is a typical starting point. Equipping your server with modules like Samsung M321RAGA0B20-CWK gives concurrent processes the bandwidth they need to run smoothly without hitting fatal memory limits.
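The memory cost of a long context window can be approximated with the standard key-value cache formula for transformer models. The sketch below is a rough estimate only: the model shape (32 layers, 8 key-value heads, head dimension 128) is a hypothetical example, not a figure published for OpenClaw or any specific model, and the cache sits in system RAM or GPU memory depending on where inference runs.

```python
def kv_cache_gib(layers: int, kv_heads: int, head_dim: int,
                 context_tokens: int, bytes_per_value: int = 2,
                 sessions: int = 1) -> float:
    """Approximate key-value cache size in GiB.

    The leading factor of 2 accounts for one key tensor and one value
    tensor per layer. bytes_per_value=2 assumes 16-bit cache entries.
    """
    total_bytes = (2 * layers * kv_heads * head_dim
                   * context_tokens * bytes_per_value * sessions)
    return total_bytes / 2**30


if __name__ == "__main__":
    # Hypothetical model shape with a 32,768-token context window.
    one_agent = kv_cache_gib(32, 8, 128, 32_768)
    eight_agents = kv_cache_gib(32, 8, 128, 32_768, sessions=8)
    print(f"1 agent:  {one_agent:.1f} GiB")
    print(f"8 agents: {eight_agents:.1f} GiB")
```

With these assumed values, a single full context uses about 4 GiB and eight concurrent agents use about 32 GiB, on top of the model weights. This is why capacity requirements climb quickly once several agents hold long contexts at the same time.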

Accelerating Inference with Advanced Compute Power

A high-core CPU is essential for handling logical routing, background scripts, and API management. However, generating AI responses requires heavy parallel computing. The recommended production setup pairs a robust enterprise CPU with dedicated professional GPUs. Utilizing a platform like the HPE ProLiant DL380 Gen11 provides the necessary dual-socket power and PCIe expansion slots to house advanced graphics cards, dramatically accelerating inference times and reducing task queuing delays.

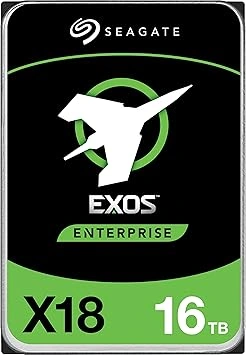

Utilizing High-Speed Storage for Persistent Agent Memory

Sophisticated AI agents rely on persistent memory to recall past conversations, and they also query large vector databases. Consumer-grade drives struggle to sustain the continuous read and write load these records generate. Enterprise storage, such as the Seagate ST16000NM004J enterprise drive, keeps historical data quickly accessible and avoids the brief stalls that occur when an agent retrieves stored context.

Minimum vs Recommended: Which Infrastructure Fits Your Business?

Evaluating Concurrency and Daily Task Volumes

The decision between the baseline and recommended tiers comes down to task volume. Small teams conducting isolated research may get by on a heavily optimized 1U entry-level server. However, if your business expects to run dozens of AI agents concurrently, handling customer service, data scraping, and internal reporting at the same time, you should adopt the recommended enterprise tier to avoid severe queuing and resource contention.
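One way to turn daily task volume into a concurrency figure is Little's Law: the average number of tasks in flight equals the arrival rate multiplied by the average task duration. The sketch below applies it with hypothetical inputs; the function names, the example workload, and the 70% target utilization are assumptions for illustration and should be replaced with measurements from your own environment.

```python
import math


def average_concurrency(tasks_per_hour: float, avg_task_seconds: float) -> float:
    """Little's Law: average tasks in flight = arrival rate x average duration."""
    return tasks_per_hour * avg_task_seconds / 3600


def agent_slots_needed(tasks_per_hour: float, avg_task_seconds: float,
                       target_utilization: float = 0.7) -> int:
    """Agent slots to provision so average load stays at the target utilization.

    target_utilization is an assumed headroom factor, not a vendor figure.
    """
    busy = average_concurrency(tasks_per_hour, avg_task_seconds)
    return math.ceil(busy / target_utilization)


if __name__ == "__main__":
    # Hypothetical workload: 600 tasks per hour, 90 seconds per task.
    print(average_concurrency(600, 90))
    print(agent_slots_needed(600, 90))
```

For the example workload this gives an average of 15 tasks in flight and 22 slots at 70% utilization, which falls in the "dozens of agents" range described above. Bursty traffic needs more headroom than an average-based estimate suggests.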

Future-Proofing Against Rapid AI Model Expansion

Open-source AI models keep growing in size and capability, so hardware that only meets today's minimum requirements will become outdated quickly. Investing in the recommended specifications, with a focus on servers that support current PCIe standards and have room for memory upgrades, lets IT leaders protect their spending and prepare for the heavier demands of future AI models.

How to Ensure Reliable Procurement of AI Server Hardware?

Navigating the Complex Supply Chain of Enterprise IT

Sourcing genuine, enterprise-grade IT hardware takes effort. The market offers many options in many configurations, and choosing the wrong combination of processors, memory, and networking equipment can derail an AI project. Organizations need a clear procurement process that confirms component compatibility and ensures each part comes with a valid warranty.

Empowering Your Infrastructure with Huaying Hengtong

Securing the right hardware requires an experienced IT supply chain partner. At Huaying Hengtong, we specialize in providing comprehensive IT solutions to clients worldwide. While we do not manufacture the equipment, we operate a rich, multi-brand product line featuring authentic servers, storage systems, and switches from the world’s leading technology brands. Based on our core concept of customer-centricity, our team leverages extensive industry experience in equipment selection to help you confidently transition from minimum testing environments to fully recommended, high-performance AI infrastructure.

Frequently Asked Questions

Q: What are the absolute minimum OpenClaw hardware requirements for 2026 deployments?

A: To simply launch the platform and run a single, basic agent for testing purposes, you will need a modern multi-core CPU and at least 16GB of ECC RAM. However, this minimum specification will struggle significantly with concurrent tasks or large context windows.

Q: Why is the recommended RAM in OpenClaw hardware requirements so high?

A: Local AI agents must load complete language models directly into the system’s memory while simultaneously keeping active workspaces open for ongoing reasoning. High-capacity RAM prevents the system from crashing or slowing down when processing complex, multi-step queries.

Q: Does storage speed impact OpenClaw hardware requirements for local deployments?

A: Yes, storage speed is highly critical. AI agents constantly read and write interaction logs to maintain persistent memory. Using high-speed enterprise drives prevents data transfer bottlenecks, allowing the AI to recall historical context instantly without causing workflow delays.

Q: How do I choose between the minimum vs recommended specs for OpenClaw?

A: The choice depends entirely on your daily concurrency volume. If you are a solo developer testing basic scripts, minimum specs may suffice. For organizations running multiple agents across different departments 24/7, investing in the recommended enterprise-tier hardware is mandatory to ensure stability.

Q: Can standard desktop processors meet OpenClaw hardware requirements for AI workflows?

A: Standard desktop processors can meet the minimum requirements for early testing. For ongoing business workloads, however, server processors are needed. They offer more PCIe lanes, support for large ECC memory configurations, and thermal designs built for round-the-clock AI workloads.